RENÉE DIRESTA

NOVEMBER 8 2018

I. INTRODUCTION

In this essay, Renée DiResta argues that a confluence of three factors – mass consolidation of audiences onto a handful of social networks; the adoption of curatorial algorithms as a primary means of disseminating and engaging with content; and the ease of precision targeting of users via the leveraging of proprietary profiles built from their own media consumption signals – has resulted in an information ecosystem that can be manipulated by a variety of actors with relative ease. This paper looks at the social media presence of one specific group of actors, American anti-vaccine activists, as a case study to better understand strategies, tactics, and impact, as well as counter-messaging and interventions.

Renée DiResta is the Director of Research at New Knowledge, and Head of Policy at the nonprofit Data for Democracy. She investigates the spread of disinformation and malign narratives across social networks, and assists policymakers in understanding and responding to the issue. She co-founded Vaccinate California.

This paper was presented on October 20, 2018 to the Social Media Storms and Nuclear Early Warning Systems Workshop held at the Hewlett Foundation campus. The workshop was co-sponsored by the Nautilus Institute, the Preventive Defense Project—Stanford University, and Technology for Global Security, and was funded by the MacArthur Foundation. This is the first of a series of papers from this workshop. It is published simultaneously here by Technology for Global Security. Readers may also wish to read REDUCING THE RISK THAT SOCIAL MEDIA STORMS TRIGGER NUCLEAR WAR: ISSUES AND ANTIDOTES and Three Tweets to Midnight: Nuclear Crisis Stability and the Information Ecosystem, published by the Stanley Foundation.

The views expressed in this report do not necessarily reflect the official policy or position of the Nautilus Institute. Readers should note that Nautilus seeks a diversity of views and opinions on significant topics in order to identify common ground.

This report is published under a 4.0 International Creative Commons License, the terms of which are found here.

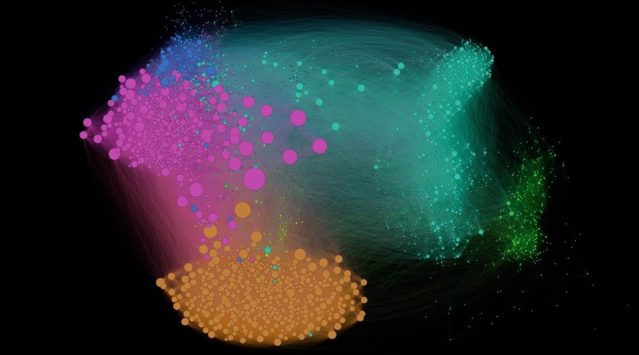

Banner image: https://www.acpjournals.org/doi/10.7326/M23-1218 and by Gilad Lotan from here

II. NAPSNET SPECIAL REPORT BY RENÉE DIRESTA

OF VIRALITY AND VIRUSES: THE ANTI-VACCINE MOVEMENT AND SOCIAL MEDIA


Vaccines are a victim of their own success. Absent the sight of individuals afflicted by communicable diseases, increasing numbers of people seem to be more afraid of vaccines than of the diseases they prevent.

This is, in part, because what they do see is misinformation. Anti-vaccine misinformation is prevalent on all major social platforms. Although anti-vaccine proponents are still a small minority in the real world, on social media they appear to hold the majority viewpoint. Since vaccination efforts rely on cumulative uptake for success, the expanding number of anti-vaccine proponents is a threat to public health. A certain percentage of the population must be immunized for the community to be protected; this principle is known as “herd immunity,” and when vaccination rates dip below that threshold, infectious diseases can take hold.
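To make the herd immunity threshold concrete, the following is a minimal sketch of the textbook approximation 1 - 1/R0, where R0 is the average number of people one case infects in a fully susceptible population. It assumes a perfectly effective vaccine and a uniformly mixing population, and the R0 values are illustrative assumptions, not figures from this report.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Approximate share of a population that must be immune so that each
    case infects, on average, fewer than one other person: 1 - 1/R0.

    Simplified textbook model: assumes a perfectly effective vaccine and a
    uniformly mixing population.
    """
    if r0 <= 1:
        # A disease with R0 <= 1 cannot sustain an outbreak in this model.
        return 0.0
    return 1.0 - 1.0 / r0


# Illustrative R0 values (assumptions for this sketch, not from the report).
examples = [
    ("moderately contagious disease", 2.0),
    ("measles-like disease, low end", 12.0),
    ("measles-like disease, high end", 18.0),
]

for label, r0 in examples:
    print(f"{label}: R0 = {r0:g}, threshold = {herd_immunity_threshold(r0):.1%}")
```

Under these assumptions, the more contagious the disease, the closer the required coverage gets to 100%, which is why even modest declines in uptake matter for a disease such as measles.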

Misinformation has existed on the internet for as long as the internet has existed, of course, and the anti-vaccine movement preceded the internet. It’s been around since the development of vaccines in Europe, in the mid-1700s. Although anti-vaccine narratives may not have changed much since then, one thing that’s different now is the way we distribute information. The internet democratized flows of information, making it possible for anyone with a point of view to reach vast numbers of people relatively easily. Anti-vax narratives perform particularly well on social media, where algorithms reward emotionally engaging personal anecdotes and sensational content — not dry scientific facts.

Messaging Against Medicine

Anti-vaccine activists established their narratives early on; in fact, the misinformation themes have remained largely unchanged since the early 1800s.

First, there are the pseudoscience claims: asserting that vaccines cause SIDS, autism, and other serious side effects, the movement’s activists spread the repeatedly debunked message that vaccines are unsafe and potentially toxic, and that the shots themselves are more harmful than the diseases they guard against. They advocate for more “natural” alternatives that sound safer and more reassuring, such as hand washing and taking vitamins; these options are contrasted with statements highlighting vaccine ingredients with scary-sounding chemical names.

But a new class of messaging began to emerge on social media as anti-vaccine activists on the left (often stereotyped as “crunchy hippie” California moms) began to shift their messaging to increase their appeal to the libertarian right. They argue that people should have freedom of choice, claiming that vaccination requirements for school are government overreach and that vaccination requirements for work are a violation of civil liberties.

Many — an increasingly vocal group — go even further and delve into the conspiratorial, claiming that the U.S. government and the medical establishment are all part of a vast cover-up concealing the cause of autism. This is likely a result of the continued erosion of trust in authority; this content often appears as “vaccine truth.” They talk about the New World Order and the Soros-backed depopulation agenda.

And finally, many tap into the public resentment and distrust of corporations, particularly pharmaceutical companies who, they claim, are only out to make a profit, not help people. Pro-vaccine activists and doctors are also often labeled as “bought” and untrustworthy.

This kind of messaging has an impact. One recent example in the United States is the case of the Somali community in Minnesota.

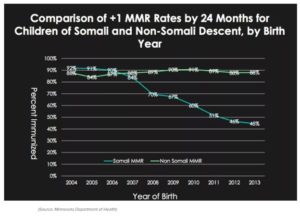

The Somali immigrant community in Minnesota became concerned that their children were being diagnosed with autism; they told doctors that autism was nonexistent in Somalia. Internet-based anti-vaccine activist groups with local chapters — the Vaccine Safety Council of Minnesota, Minnesota Natural Health Coalition, National Health Freedom Action, Minnesota Vaccine Freedom Coalition, and the Organic Consumers Association — formed a coalition, and repeatedly invited anti-vaccine influencers (including Andrew Wakefield) to “educate” the immigrant community. They spread anti-vaccine propaganda on social networks, and reinforced it via in-person meetings with the community over a period of years. The Somalis became convinced that the MMR vaccine caused autism; the result was a dramatic decline in immunization. Coverage dropped from 88% to 42% immunized in 2013; in 2017, the community experienced a measles outbreak that infected over 70 children.

As the disease spread, the press began to investigate and interviewed members of the Somali immigrant community.

“Sayid said she doesn’t oppose vaccines in general — she plans to get her oldest vaccinated at 7 and the younger two at age 4 — but she’s heard that early vaccination can damage an infant’s language skills.

“The internet and people in the community told me MMR causes autism,” Sayid said. “Everybody is afraid.”

Between 50% and 80% of people search for health-related information online each year, including information about vaccines. Very few discuss their concerns with medical experts; they simply read and internalize the messages they see. Repeated exposure to misinformation appears to have an impact. As one recent paper studying the HPV vaccine conversation on Twitter found,

Vaccine coverage was lower in states where safety concerns, misinformation, and conspiracies made up higher proportions of exposures, suggesting that negative representations of vaccines in the media may reflect or influence vaccine acceptance.

Social platforms and their gameable algorithms have provided a space for the anti-vaccine movement to thrive. Search functions and recommendation engines proactively surface anti-vaccine communities and content. Social networks have profoundly transformed communication, and the anti-vaccine movement is capitalizing on the new infrastructure of speech to amplify its growth and reach new audiences.

How Misinformation Moves

Zero-cost publishing has been around since the early days of the internet; sites like Geocities and Blogger gave everyone the ability to write and post whatever they wanted online for free. This of course meant that there was misinformation online — including anti-vaccine misinformation. But in the decentralized days of the early blogosphere, that was largely irrelevant; it was very hard to find things. True believers who were looking for the content might find like-minded people, but things didn’t spread very easily. Activists and people promoting causes still attempted to develop and grow audiences through real-world events headlined by celebrities, and via earned media (mainstream news coverage).

In the early 2000s, the social networks appeared. Relatively quickly, they grew into large communities, with hundreds of thousands, then millions, and now — in the case of Facebook — 2 billion users. The sites ran advertising-based business models that incentivized them to keep users on their sites and engaged for as long as possible. To facilitate that, platforms gathered data on their users, partially to monetize ads by way of efficient ad targeting, but also to understand what kinds of content users would want to see and engage with. They developed algorithms to sort, rank, and recommend content, for the purpose of driving engagement. Facebook, Twitter, YouTube — the offerings may vary by feature and purpose, but the underlying needs of the ads-based business model are very similar.

Early content creators of the internet recognized the distribution channel potential of the social platforms. Large media entities and self-anointed “citizen journalists” alike flocked to the platforms, which built sharing tools and virality engines and Like buttons to help them spread their content to new audiences. The platforms wanted content to keep users engaged; the content creators wanted access to the platforms’ audiences, sometimes to monetize them and, in many cases, simply to reach them.

The social companies slowly expanded, each spidering out from its original core feature set into new areas by way of acquisitions as well as development. Originally, people brought their social network to Facebook; with the creation of Pages and Groups, they can now grow a network on Facebook, connecting with like-minded people and pages suggested to them by a recommendation engine. Truther communities recognized the potential of social networks; 9/11 truthers are one example of a group that consolidated and gained visibility early on. Conspiracy theorists, who have little access to mainstream media, are often early adopters of new forms of social media. Anti-vaccine activists have been at the forefront of leveraging all of the technological features social platforms provide.

A Multi-Channel Marketing Strategy

The anti-vaccine movement is well-funded and technically savvy. They followed the best practices of internet marketers, writing blogs and cross-promoting content and sharing material across all of the new platforms. Social network design choices meant that popularity determined what people saw; even nuanced policy issues began to be run as digital marketing campaigns.

Facebook: Anti-vaccine activists use all of Facebook’s features to grow and reach large audiences, and to build community. Organizers buy ads to promote their Pages and Groups; anti-vaccine keywords appear as suggested options in Facebook’s interest-based targeting tool, and vaccine truthers target new parents and, very deliberately, pregnant women. They leverage Facebook Live for real-time communication with audiences. Facebook Pages and Groups are used to coordinate, engaging supporters in everything from advocating on Twitter for regional legislative initiatives, to harassing pro-vaccine doctors. Facebook’s recommendation engine proactively promotes anti-vax groups; its search results for terms such as “vaccine” return almost exclusively anti-vaccine results.

Instagram: Owned by Facebook, Instagram functions in the movement primarily as a tool for sharing memes and short video clips. Users leverage multiple hashtags, creating discoverability and cross-promoting content to other potentially receptive audiences (e.g., using #GMO or #geoengineering to push their content into the feeds of anti-GMO or chemtrail conspiracy theorists).

Twitter: Anti-vaccine activists use Twitter to spread their message to the broader public, to get celebrity influencers to amplify them, and to reach the mainstream media. They coordinate retweet and follow-back rings to increase their reach. They deliberately attempt to co-opt hashtags created by public health officials (such as #VaccinesWork), and often piggyback on large audiences paying attention to other topics (for example, tweeting vaccine content into #BlackLivesMatter). Harassment in the form of brigading, unflattering memes, and threats is also common on Twitter. Anti-vaccine activists also run automated and partially automated accounts.

YouTube: There are, at a minimum, hundreds of YouTube vaccine truther videos dedicated to “exposing the truth” about immunization risks, deaths, etc. First-person testimonials from emotional parents describing their child’s harm from a vaccine are highly ranked in YouTube search results and appear in autoplay. There is a proliferation of videos that provide anti-vaccine parents with instructions on how to evade state vaccine requirements.

Pinterest: Pinterest is a social network for creating and sharing visual bookmarks; it acts as a site for storing as well as sharing favorite articles. Users comment on, like, and repin content to help spread anti-vaccine narratives. In 2015, researchers reported that 75% of the vaccine-related posts on Pinterest were negative.

Google Search: Google Search is facilitating the spread of vaccine misinformation in two primary ways. First, the platform makes suggestions via its autocomplete function. Starting to type “vaccine,” for example, autopopulates with “causes autism,” reinforcing conspiratorial narratives and potentially prompting users in specific directions. Second, search rankings for thin terms (those without many results) will often return propaganda in response to medical queries, with potentially disastrous results. Popular anti-vaccine mommy blogs outrank the Centers for Disease Control in the results for “vitamin k shot.” The ranking suggests that popular sites, or sites with good SEO, are high-quality; in reality they are full of health misinformation and propaganda. The algorithm doesn’t account for whether an article is factually accurate.

Cross-platform sharing features allow users to grow their social media presence and create distribution channels easily. A single person, for example, can Tweet about a Facebook Page, share a YouTube video and a link to an anti-vaccine book on Amazon; a dedicated group will often push a hashtag and create a labyrinth of accounts and messaging that are difficult to moderate or debunk. Incorporating bots — yes, the anti-vaccine movement runs bots — lets them reach even further and amplify real human posts with automated content. The messaging is engaging and sticky, and posts receive hundreds of shares and comments.

Anti-vaccine activists are active and easily discoverable on all social platforms and have been for quite some time. They use repetitive messaging reinforcement and widespread distribution with the goal of manufacturing consensus, and take advantage of the amplification that social platforms give them due to easily manipulated algorithmic curation systems. Google and Facebook algorithms inadvertently create the illusion of fact and truth out of mere ubiquity; if you make it trend, you make it true.

Influencer Marketing to Increase Media Reach

To help their content rise to the top, the anti-vaccine movement leverages thought leaders who drive narrative development and use their influence on various media channels to disseminate messages to a highly engaged, passionate rank-and-file. One 2017 paper, “Vaccine Rejection and Hesitancy: A Review and Call to Action,” examined several categories of influencers in the anti-vaccine movement, including a small collection of medical professionals (many of whom were trained in unrelated fields, or had their theories or articles discredited or retracted), celebrities, and community organizers. They found that their combined audience exceeded 7 million Facebook followers (although overlap is probable).

The prominent Facebook Groups and Pages include legislative action centers such as the National Vaccine Information Center, which actively lobbies to weaken, eliminate, and block vaccination requirements for school children. The NVIC is supported by a network of individual state “Vaccine Freedom” and “Vaccine Choice” groups (e.g., Texans for Vaccine Choice) that consist of a few dozen to a few thousand local grassroots activists who organize online to lobby — and sometimes harass — legislators in real life as well as on Facebook and Twitter.

There are also Groups and Pages led by individuals with financial motivation, such as Stop Mandatory Vaccination, run by an individual who self-identifies as a citizen journalist with an ad-funded blog who sells homeopathic and “natural health” supplements. Stop Mandatory Vaccination has a fee-based community membership model, and is always running a handful of GoFundMe campaigns. Similarly, there is The Truth About Vaccines, which has a community but is largely an affiliate-marketing effort organized around an 8-part “vaccine truth” miniseries. The team has a similar program called The Truth About Cancer, which is widely regarded as predatory cancer quackery. Both create extensive amounts of content on YouTube.

And, of course, there are the celebrities, influencers, and media personalities, most notably Andrew Wakefield, a discredited former physician responsible for the original retracted 1998 study in The Lancet claiming that MMR vaccination causes autism. He is one of the creators of the 2016 documentary “VAXXED,” which runs extensive citizen media efforts on social channels including Facebook Pages, Groups, a YouTube channel, Periscope, Twitter, and more. High-profile celebrities who have vocally supported him have included Elle Macpherson, Jenny McCarthy, Jim Carrey, Jenna Elfman, Robert De Niro, Selma Blair, Kirstie Alley, Donald Trump, Rob Schneider, and Esai Morales.

Other notable influencers active in sharing anti-vaccine misinformation are people with large audiences whose primary mission is not anti-vaccine, but who have strong philosophical alignment and audience overlap — affinity marketing, in a sense. These include Joseph Mercola, an author, former physician, and supplement salesman who runs the website Mercola.com; Alex Jones and the team of InfoWars, a supplement-selling conspiratorial news organization with radio, TV, and digital distribution that was recently kicked off of most social media platforms; Mike Adams, the “Health Ranger” of website Natural News, which also sells supplements and pushes conspiracy theories about vaccines, Ebola, HIV, chemtrails, GMOs, and myriad other health issues; and David Avocado Wolfe, who has 12.5 million Facebook followers alone, and whose page is purportedly health and nutrition focused but still delves into vaccine-related conspiratorial content.

Solutions

This playbook is about far more than vaccines. The same kinds of strategies involved in pushing narratives across the social ecosystem are also used by everyone from ISIS terrorists to domestic political ideologues. The same kinds of audience building, meming, ad development and cross-platform content strategies were used by the Russian Internet Research Agency for the purpose of sowing social division from 2014 to 2017. Any highly motivated group can take advantage of the structural flaws of the social ecosystem, and attempt to manipulate public opinion and manufacture consensus.

Understanding how these systemic information flows work is a prerequisite to eliminating the ability for malign actors to run these campaigns, and reducing the impact that they have. However, this presently relies wholly on the tech platforms — and they are struggling to decide how to respond.

The medical establishment is also trying to figure out how to respond to anti-vaccine propaganda online, and to concerned parents who decline immunizations in their offices based on misinformation that they’ve read online.

When parents refuse or delay vaccinations for their children, it puts physicians in a challenging position. Some doctors expel anti-vaccinating families from their practices, but the ethics and impact are a topic of debate and the children are much more likely to remain unvaccinated. Most providers take the route of attempting to have a dialogue and to assuage concerns, respectfully inquiring about the reasons for parents’ doubts about the safety of vaccines. Similar to discussions involved in anti-smoking or deradicalization programs, this one-on-one approach is compassionate and empathetic rather than confrontational. It acknowledges parental fears and engages parents in a non-threatening conversation. The hope is that a relationship built on trust and respect may succeed in getting parents to reconsider, or to participate in a slower vaccination schedule that, while not ideal, still ultimately protects the child.

Besides the one-to-one approach, there is the community-centric approach. Grassroots groups create presences on social media to counter the proliferation of anti-vaccine narratives and offer a space for questioning parents to engage with positive parental voices. In Washington, VaxNorthwest has created a program called ImmunityCommunity in which parents who support vaccination are trained to educate those who are vaccine hesitant. Direct and community engagement has demonstrated the highest degree of success. Unfortunately, these solutions don’t necessarily scale to internet speed; increasing numbers of medical professionals are joining Twitter and other services, but it takes time to build an audience and distribution infrastructure.

Taking No Action Is Making A Choice

While one-on-one and community action, or growing counter-movements online, are potentially good solutions, they take extensive money, effort, and time. There is another option, which we can (fittingly) draw from epidemiology: the platforms themselves can do more to stop the transmission of false narratives, and to inoculate people. They can audit their algorithms to better understand how these narratives spread, and the downstream impact they have. Google frequently states its commitment to returning high-quality results for “Your Money or Your Life” issues. It has vastly improved results in Search through “cards” and features that populate with information from Wikipedia and other validated sources; however, YouTube still returns, autoplays, and recommends anti-vaccine propaganda. Facebook has attempted to offer third-party fact-checking services alongside misinformation articles that appear in a user’s newsfeed (perhaps a form of inoculation against belief, though results have been mixed). And yet, the company primarily refuses to comment on why it allows anti-vaccine hoaxes to spread on the site, why it accepts money for anti-vaccine ads that actively promote health misinformation, or why it tacitly promotes anti-vax groups by pushing them via the recommendation engine.

By assisting anti-vaccination groups in growing their numbers and spreading their messages, social platforms are having a detrimental impact on public health, and on the lives of babies whose parents are misled into believing anti-vaccine misinformation. Only they know the full measure of what’s happening on their internal platforms: whether people are proactively searching for these groups, or responding to the power of algorithmic suggestion; how fast and far content is spreading; what percentage of the content that susceptible or targeted users see is misinformation. It’s time we had a better understanding of the dynamics and pathways at work, because this problem is far larger than vaccines.

III. NAUTILUS INVITES YOUR RESPONSE

The Nautilus Asia Peace and Security Network invites your responses to this report. Please send responses to: nautilus@nautilus.org. Responses will be considered for redistribution to the network only if they include the author’s name, affiliation, and explicit consent.
